Visualisation

Augmented spectator sports experience

The way we consume media, and sports entertainment in particular, has changed dramatically in recent years. While in the past sporting events were mostly followed on television or on-site, spectators can nowadays follow the same events remotely, live or as replays, on their mobile devices. However, there is still a gap between what spectators experience at live sporting events and the online content: on-site spectators often miss out on the enriched content that is available to remote viewers. The goal of our research is to explore new ways to experience live sporting events using Visual Computing. In particular, we are interested in using Augmented Reality to place event statistics such as scoring, penalties, team statistics and additional player information into the spectators' field of view, based on their location within the venue. This aim poses major challenges for localisation and tracking as well as for visualisation.
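To make the placement idea concrete, here is a minimal sketch of how an annotation anchored at a known 3D point in the venue (for example a player's position) could be projected into a spectator's camera image. It is not the project's implementation. It assumes that localisation and tracking have already produced the camera pose and that a standard pinhole camera model applies. `CameraPose`, `Intrinsics` and `project_to_view` are hypothetical names, and the pose and intrinsic values would have to come from whatever tracking system is actually used.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class CameraPose:
    # Hypothetical output of localisation/tracking: world-to-camera
    # rotation (row-major 3x3) and translation, in venue coordinates.
    rotation: Sequence[Sequence[float]]
    translation: Vec3


@dataclass
class Intrinsics:
    # Assumed pinhole intrinsics of the spectator's device camera.
    fx: float
    fy: float
    cx: float
    cy: float
    width: int
    height: int


def project_to_view(point_world: Vec3, pose: CameraPose,
                    k: Intrinsics) -> Optional[Tuple[float, float]]:
    """Return the pixel (u, v) at which an annotation anchored at point_world
    should be drawn, or None if it is behind the camera or off-screen."""
    # Transform into camera coordinates: p_cam = R * p_world + t
    x, y, z = (
        sum(pose.rotation[i][j] * point_world[j] for j in range(3))
        + pose.translation[i]
        for i in range(3)
    )
    if z <= 0:  # behind the camera (the camera looks along +z)
        return None
    # Perspective division and mapping to pixel coordinates
    u = k.fx * x / z + k.cx
    v = k.fy * y / z + k.cy
    if not (0 <= u < k.width and 0 <= v < k.height):
        return None  # outside the spectator's field of view
    return (u, v)
```

For example, with an identity rotation, zero translation and intrinsics `Intrinsics(800, 800, 640, 360, 1280, 720)`, a point 10 m straight ahead, `(0.0, 0.0, 10.0)`, maps to the image centre `(640.0, 360.0)`. A point behind the spectator returns `None`, so no statistic is drawn for it.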

Situated Visualisation of GIS Data

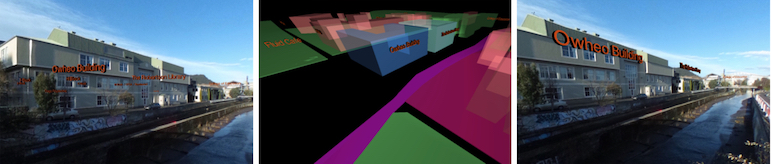

Situated Visualisation of data from geographic information systems (GIS) faces a number of problems, such as limited visibility and legibility, information clutter and a limited understanding of spatial relationships. In this work, we address visibility, information clutter and the understanding of spatial relationships with a set of dynamic Situated Visualisation techniques tailored to the special needs of GIS data, in particular for the street-view-like perspectives used by many navigation applications. The proposed techniques take GIS data as input and provide dynamic annotation placement, dynamic label alignment and occlusion culling.
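The following minimal sketch illustrates two of the ideas named above, occlusion culling and dynamic annotation placement. It is a generic greedy decluttering scheme and not necessarily the technique used in this work. The sketch assumes that each GIS feature has already been projected to a screen anchor with a known viewer distance, and that a depth buffer of the surrounding geometry (for example rendered from GIS building footprints) is available as a 2D list. `Label`, `place_labels`, `max_shifts` and `tolerance` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple

Rect = Tuple[float, float, float, float]  # (x0, y0, x1, y1) in pixels


@dataclass
class Label:
    name: str
    u: float       # projected anchor x (pixels)
    v: float       # projected anchor y (pixels)
    depth: float   # distance from the viewer to the GIS feature (metres)
    width: float   # label size in pixels
    height: float


def overlaps(a: Rect, b: Rect) -> bool:
    # Axis-aligned rectangle intersection test (touching edges do not count)
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def place_labels(labels: List[Label], depth_buffer: Sequence[Sequence[float]],
                 max_shifts: int = 3, tolerance: float = 0.5) -> Dict[str, Rect]:
    """Greedily place labels nearest-first, culling occluded features and
    shifting labels upward to avoid overlap (hypothetical parameters)."""
    placed: Dict[str, Rect] = {}
    rows, cols = len(depth_buffer), len(depth_buffer[0])
    for label in sorted(labels, key=lambda l: l.depth):
        px, py = int(label.u), int(label.v)
        if not (0 <= px < cols and 0 <= py < rows):
            continue  # anchor is off-screen
        # Occlusion culling: skip features hidden behind closer geometry
        if label.depth > depth_buffer[py][px] + tolerance:
            continue
        # Try the default slot above the anchor, then stack further upward
        for shift in range(max_shifts + 1):
            y1 = label.v - shift * label.height
            rect = (label.u - label.width / 2, y1 - label.height,
                    label.u + label.width / 2, y1)
            if not any(overlaps(rect, r) for r in placed.values()):
                placed[label.name] = rect
                break  # labels with no free slot are dropped
    return placed
```

Placing labels nearest-first gives closer, usually more relevant features priority when screen space is scarce. The upward stacking keeps each label horizontally aligned with its anchor, so the link between label and feature stays readable.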

Image-based X-ray visualization

In this work, we evaluated different state-of-the-art approaches for implementing an X-ray view in Augmented Reality (AR). We focus on approaches that support better scene understanding, in particular a better sense of the depth order between physical and digital objects. One of the main goals of this work is to provide effective X-ray visualization techniques that work in unprepared outdoor environments. To achieve this goal, we focused on methods that automatically extract depth cues from video images. The extracted depth cues are combined into ghosting maps, which assign each video image pixel a transparency value that controls the overlay in the AR view. In our study, we analyze three types of ghosting maps: 1) alpha blending, which uses a uniform alpha value across the ghosting map; 2) edge-based ghosting, which is based on edge extraction; and 3) image-based ghosting, which incorporates perceptual grouping, saliency information, edges and texture details. Our study results demonstrate that image-based ghosting helps users understand the subsurface location of virtual objects better than alpha blending or edge-based ghosting.
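To illustrate how a ghosting map controls the overlay, the sketch below builds the two simpler maps, a uniform alpha map and an edge-based map, and composites a grayscale video frame over a rendered virtual layer. It is only an illustration under stated assumptions: the edge map uses a Sobel gradient magnitude scaled by a hypothetical `gain`, the map stores the opacity of each video pixel (1 = physical scene fully visible), and border pixels are left fully transparent. The image-based variant, with perceptual grouping and saliency, is beyond the scope of this example. All function names are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale image, values in [0, 1]


def uniform_ghosting_map(video: Image, alpha: float) -> Image:
    # Alpha blending: every video pixel gets the same opacity
    return [[alpha for _ in row] for row in video]


def edge_ghosting_map(video: Image, gain: float = 1.0) -> Image:
    # Edge-based ghosting: opacity follows the Sobel gradient magnitude, so
    # strong edges of the physical scene stay visible as depth cues while
    # flat regions reveal the virtual (subsurface) content.
    h, w = len(video), len(video[0])
    out = [[0.0] * w for _ in range(h)]  # border pixels stay transparent
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = (video[y - 1][x + 1] + 2 * video[y][x + 1] + video[y + 1][x + 1]
                  - video[y - 1][x - 1] - 2 * video[y][x - 1] - video[y + 1][x - 1])
            gy = (video[y + 1][x - 1] + 2 * video[y + 1][x] + video[y + 1][x + 1]
                  - video[y - 1][x - 1] - 2 * video[y - 1][x] - video[y - 1][x + 1])
            out[y][x] = min(1.0, gain * (gx * gx + gy * gy) ** 0.5)
    return out


def composite(video: Image, virtual: Image, ghosting: Image) -> Image:
    # Per-pixel blend: a * video + (1 - a) * virtual
    return [[a * v + (1 - a) * g for v, g, a in zip(vr, gr, ar)]
            for vr, gr, ar in zip(video, virtual, ghosting)]
```

With a uniform map, the whole virtual object is seen through a uniformly faded video frame. With the edge-based map, the object shows through flat surfaces but is occluded along strong edges, which is one way to convey that the object lies behind or beneath the physical surface.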